Abstract

Study Site & Data Collection

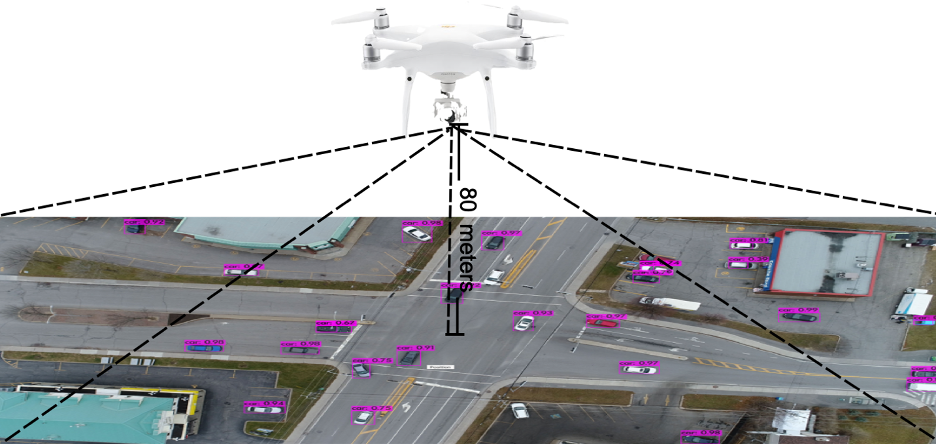

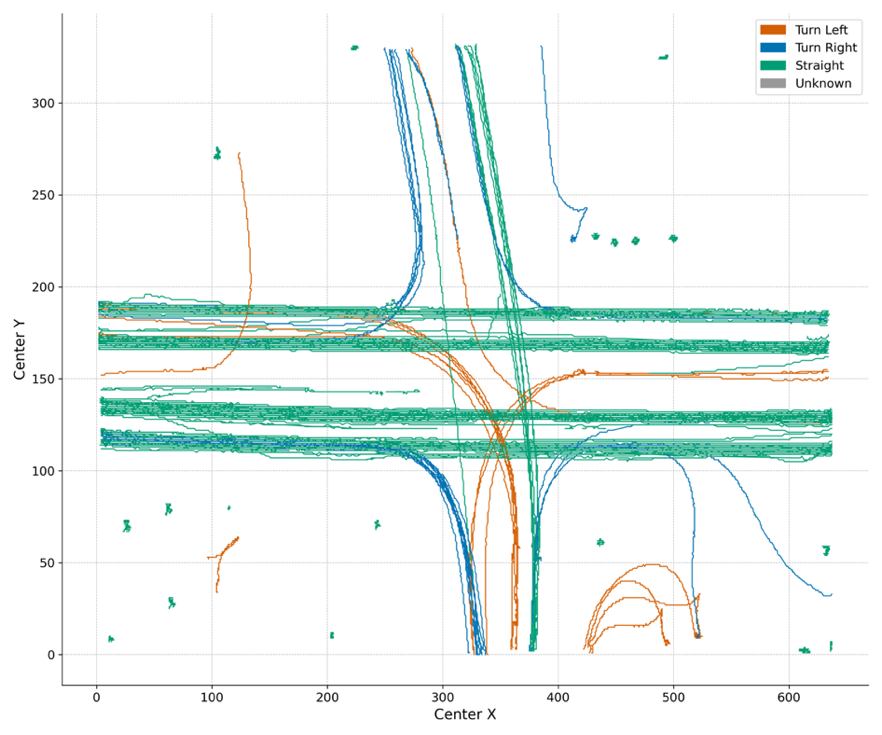

Vehicle trajectories were collected with a DJI drone hovering 80 meters above a four-arm signalized intersection in Châteauguay, Montreal, QC. Video was captured at 30 fps during peak hours, then stabilized with a Fourier-Mellin transform to correct UAV motion artifacts.

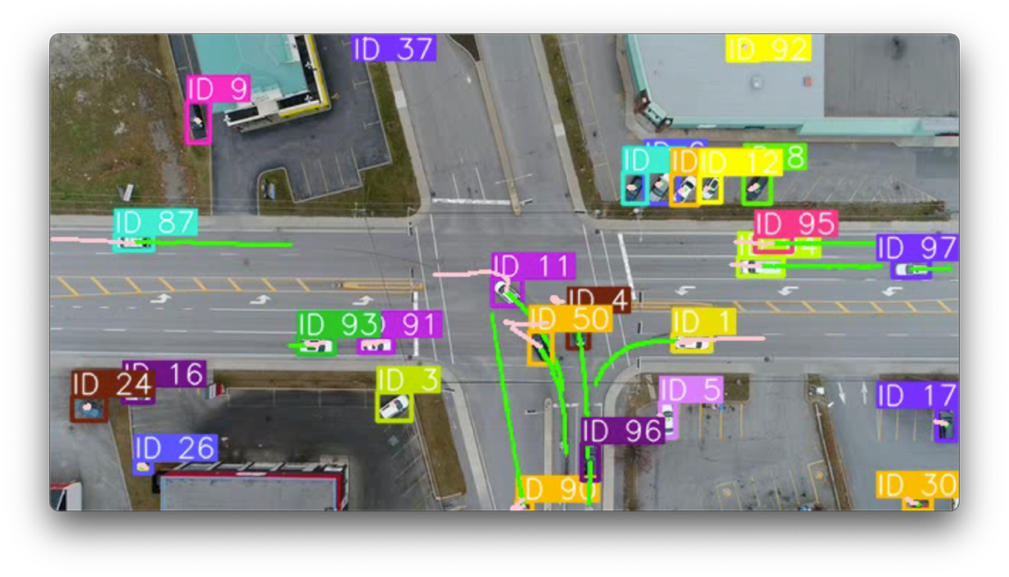

Vehicles were detected using YOLOv8 retrained on 18,000 custom-labeled images, and tracked across frames with Deep SORT. The dataset includes passenger vehicles, trucks, and buses, split into 70% training, 15% validation, and 15% test sets.
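The 70/15/15 split can be sketched as follows. The text does not say whether the split was made per frame, per track, or per recording, so splitting by track ID (which keeps all frames of one vehicle in the same subset) is an assumption here, as are the function name `split_track_ids` and the fixed seed.

```python
import random

def split_track_ids(track_ids, train=0.70, val=0.15, seed=0):
    """Shuffle unique track IDs and cut them into train/val/test lists.

    Assumption: the split is done per track, not per frame, so that no
    vehicle contributes samples to more than one subset.
    """
    ids = sorted(set(track_ids))
    random.Random(seed).shuffle(ids)      # deterministic shuffle
    n_train = round(len(ids) * train)
    n_val = round(len(ids) * val)
    # Whatever remains after train and validation becomes the test set.
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]

train_ids, val_ids, test_ids = split_track_ids(range(1000))
print(len(train_ids), len(val_ids), len(test_ids))  # 700 150 150
```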

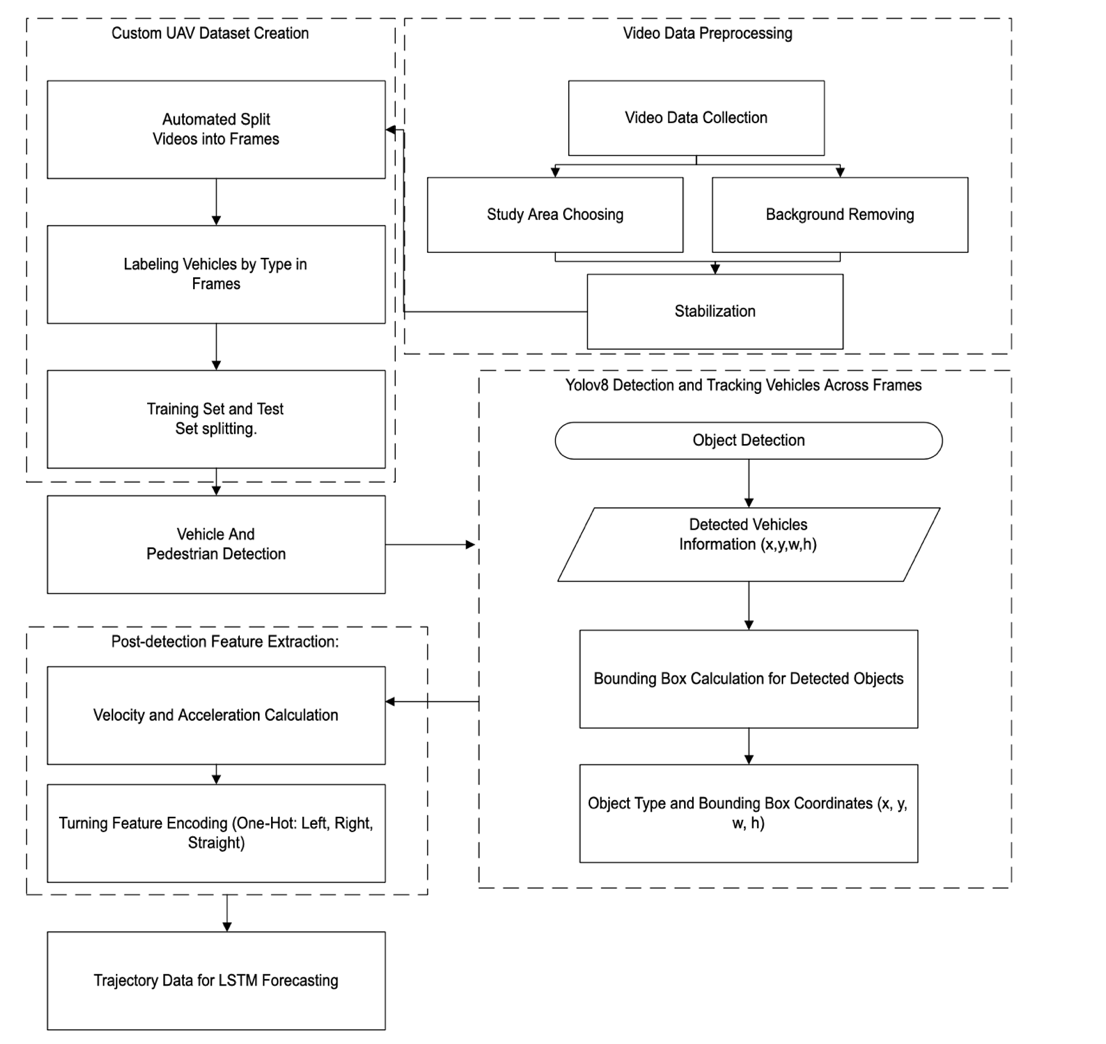

Method

Turn-Aware Feature Encoding

The key insight is that turning maneuvers are the hardest to predict, yet standard LSTMs have no explicit representation of maneuver intent. We address this with:

- Cumulative heading change — A 1-second rolling sum of instantaneous angular changes, providing a smooth signal of turning intent

- One-hot maneuver encoding — Left/right/straight classification using a 10° heading threshold, aggregated via majority vote to suppress frame-level noise

- Feature vector — Position (x, y), velocity (vx, vy), acceleration (ax, ay), plus the one-hot turn indicators at each time step
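The three steps above can be sketched in plain Python. This is a minimal illustration, not the released implementation: the heading is derived from the velocity vector, a positive (counter-clockwise) heading change is taken to mean a left turn, and the majority vote uses a trailing 1-second window. All three choices, and every function name below, are assumptions.

```python
import math
from collections import Counter

FPS = 30               # capture rate stated in the text
WINDOW = FPS           # 1-second rolling window, in frames
THRESHOLD_DEG = 10.0   # heading threshold stated in the text
ONE_HOT = {"left": (1, 0, 0), "right": (0, 1, 0), "straight": (0, 0, 1)}

def wrap_deg(angle):
    """Wrap an angle in degrees to [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0

def heading_changes(vx, vy):
    """Per-frame heading change in degrees; the first frame gets 0."""
    headings = [math.degrees(math.atan2(y, x)) for x, y in zip(vx, vy)]
    return [0.0] + [wrap_deg(b - a) for a, b in zip(headings, headings[1:])]

def cumulative_heading(changes, window=WINDOW):
    """Rolling sum of the most recent `window` heading changes."""
    out, total = [], 0.0
    for i, change in enumerate(changes):
        total += change
        if i >= window:
            total -= changes[i - window]   # drop the sample leaving the window
        out.append(total)
    return out

def label(cum_deg):
    """Classify one frame. Assumed sign convention: positive = left."""
    if cum_deg > THRESHOLD_DEG:
        return "left"
    if cum_deg < -THRESHOLD_DEG:
        return "right"
    return "straight"

def majority_vote(labels, window=WINDOW):
    """Replace each label with the most common label in its trailing window."""
    return [Counter(labels[max(0, i - window + 1):i + 1]).most_common(1)[0][0]
            for i in range(len(labels))]

def encode(x, y, vx, vy, ax, ay):
    """Build the 9-dimensional per-frame feature vector."""
    cum = cumulative_heading(heading_changes(vx, vy))
    votes = majority_vote([label(c) for c in cum])
    return [(x[i], y[i], vx[i], vy[i], ax[i], ay[i], *ONE_HOT[votes[i]])
            for i in range(len(x))]
```

A stationary vehicle has an undefined heading, so a real pipeline would likely need to hold the last valid heading when speed is near zero; the sketch does not handle that case.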

Encoder–Decoder Architecture

The Turn-Aware LSTM uses a 2-layer stacked encoder (128 hidden units) to compress the observed trajectory, then a decoder LSTM generates future positions autoregressively. The turn features are concatenated with kinematic features at the input, providing explicit maneuver context throughout the encoding process.
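A minimal PyTorch sketch of this encoder-decoder layout is given below. Only the 2-layer, 128-unit encoder and the autoregressive decoding come from the description above. The decoder depth, the linear output head, feeding the last observed position as the first decoder input, and the class name `TurnAwareSeq2Seq` are assumptions made for illustration.

```python
import torch
from torch import nn

class TurnAwareSeq2Seq(nn.Module):
    """Encoder-decoder LSTM over 9 features: x, y, vx, vy, ax, ay + 3 one-hot turn flags."""

    def __init__(self, n_features=9, hidden=128, layers=2):
        super().__init__()
        self.encoder = nn.LSTM(n_features, hidden, num_layers=layers, batch_first=True)
        # Assumption: the decoder mirrors the encoder so it can reuse its final state.
        self.decoder = nn.LSTM(2, hidden, num_layers=layers, batch_first=True)
        self.head = nn.Linear(hidden, 2)   # hidden state -> (x, y)

    def forward(self, observed, horizon):
        # observed: (batch, T_obs, n_features); features 0 and 1 are x and y.
        _, state = self.encoder(observed)          # compress the observed track
        step_in = observed[:, -1:, :2]             # start from the last observed position
        outputs = []
        for _ in range(horizon):
            out, state = self.decoder(step_in, state)
            step_in = self.head(out)               # prediction becomes the next input
            outputs.append(step_in)
        return torch.cat(outputs, dim=1)           # (batch, horizon, 2)

model = TurnAwareSeq2Seq()
future = model(torch.zeros(4, 30, 9), horizon=90)  # 1 s observed, 3 s predicted
print(future.shape)  # torch.Size([4, 90, 2])
```

Because the turn indicators are part of the encoder input at every time step, no architectural change is needed relative to a vanilla LSTM beyond the wider input layer.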

Results

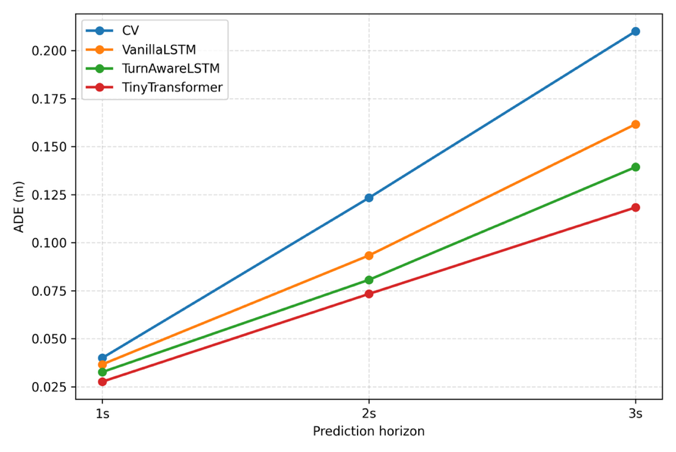

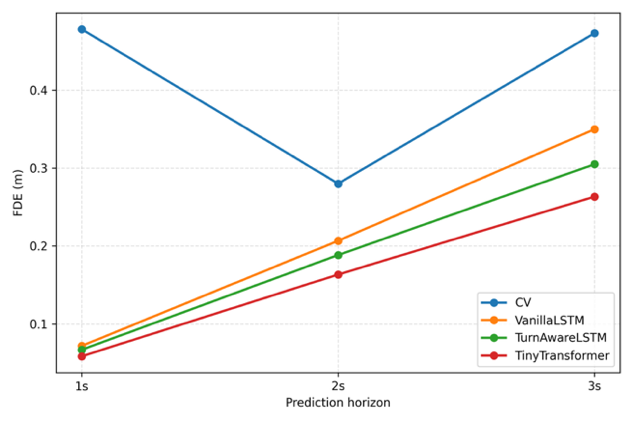

Models were evaluated at 1 s, 2 s, and 3 s horizons (30, 60, and 90 frames) against three baselines: Constant Velocity (CV), Vanilla LSTM, and a Tiny Transformer.
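To make the evaluation setup concrete, the sketch below implements a constant-velocity baseline and the final displacement error (FDE) at the three horizons. The exact CV formulation used in the study is not given, so straight-line extrapolation of the last observed velocity is an assumption. Average displacement error (ADE) is included because it is commonly reported alongside FDE; the text itself names only FDE.

```python
import math

FPS = 30
HORIZONS = {"1s": 30, "2s": 60, "3s": 90}   # frames, as stated in the text

def constant_velocity(last_xy, velocity_xy, n_frames, fps=FPS):
    """Extrapolate the last observed position along a fixed velocity (m/s)."""
    x, y = last_xy
    vx, vy = velocity_xy
    return [(x + vx * k / fps, y + vy * k / fps) for k in range(1, n_frames + 1)]

def fde(pred, truth, n_frames):
    """Final displacement error: distance at the last frame of the horizon."""
    (px, py), (tx, ty) = pred[n_frames - 1], truth[n_frames - 1]
    return math.hypot(px - tx, py - ty)

def ade(pred, truth, n_frames):
    """Average displacement error over the first n_frames predicted frames."""
    dists = [math.hypot(p[0] - t[0], p[1] - t[1])
             for p, t in zip(pred[:n_frames], truth[:n_frames])]
    return sum(dists) / n_frames

# Toy case: truth runs parallel to the prediction with a 0.3 m lateral offset.
pred = constant_velocity((0.0, 0.0), (10.0, 0.0), 90)
truth = [(10.0 * k / FPS, 0.3) for k in range(1, 91)]
for name, frames in HORIZONS.items():
    print(name, round(fde(pred, truth, frames), 3), round(ade(pred, truth, frames), 3))
```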

Overall Performance

The Tiny Transformer achieves the lowest overall errors. The Turn-Aware LSTM outperforms the Vanilla LSTM at every horizon, narrowing the gap to the Transformer at negligible additional inference cost.

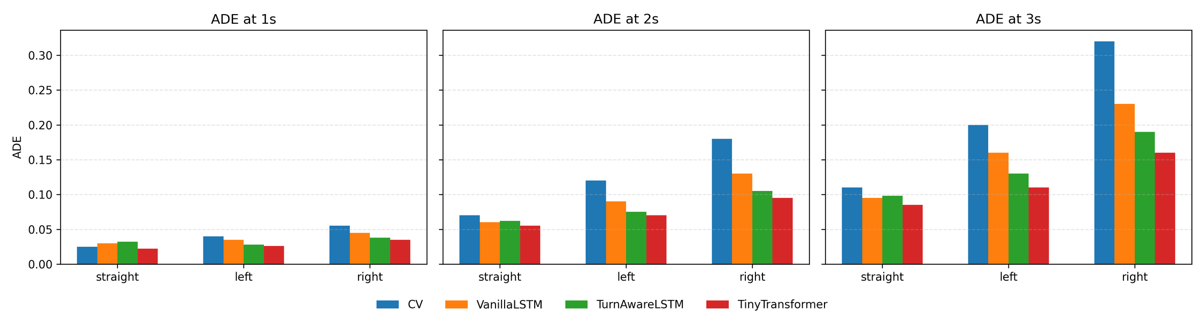

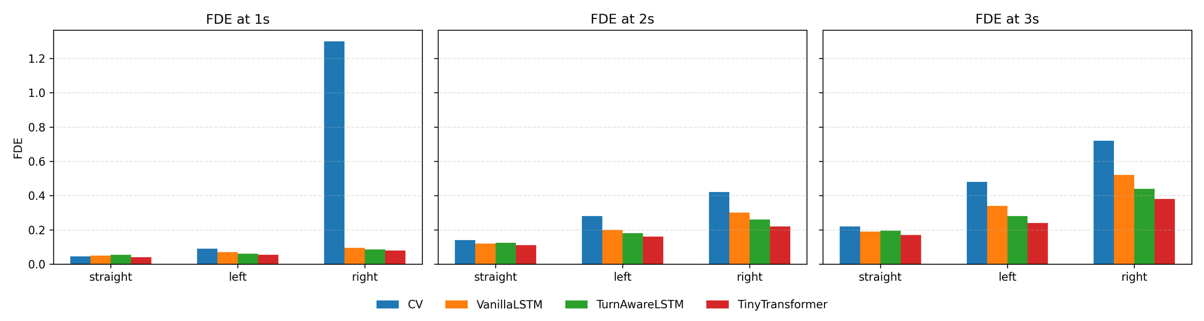

Per-Maneuver Breakdown

The targeted benefit of turn encoding is most visible in maneuver-specific analysis:

| Maneuver | Metric | Vanilla LSTM | Turn-Aware LSTM | Improvement |
|---|---|---|---|---|
| Right Turn | FDE @ 3s | ~0.51 m | ~0.42 m | ~18% |
| Left Turn | FDE @ 3s | ~0.35 m | ~0.28 m | ~20% |
| Straight | FDE @ 3s | ~0.18 m | ~0.17 m | ~6% |

Turn encoding provides the largest gains precisely where prediction is hardest: turning maneuvers.
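The improvement column is the relative reduction in FDE with respect to the Vanilla LSTM. The short check below recomputes it from the tabulated values; since those values are approximate, the percentages are approximate as well. The helper name `relative_improvement` is introduced here for illustration.

```python
# FDE @ 3s in meters: (Vanilla LSTM, Turn-Aware LSTM), copied from the table.
fde_3s = {
    "Right Turn": (0.51, 0.42),
    "Left Turn": (0.35, 0.28),
    "Straight": (0.18, 0.17),
}

def relative_improvement(baseline, improved):
    """Percentage reduction in error relative to the baseline."""
    return 100.0 * (baseline - improved) / baseline

for maneuver, (vanilla, turn_aware) in fde_3s.items():
    print(f"{maneuver}: {relative_improvement(vanilla, turn_aware):.1f}%")
# Right Turn: 17.6%
# Left Turn: 20.0%
# Straight: 5.6%
```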

Computational Efficiency

| Model | Inference Time | Hardware |
|---|---|---|
| Constant Velocity | < 1 ms | CPU |
| Vanilla LSTM | ~2.3 ms | RTX 4090 |
| Turn-Aware LSTM | ~2.5 ms | RTX 4090 |
| Tiny Transformer | ~4.8 ms | RTX 4090 |

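The turn-aware features add only ~0.2 ms of overhead relative to the Vanilla LSTM (~2.5 ms vs. ~2.3 ms). At the 30 fps capture rate, one frame lasts about 33 ms, so inference on this hardware fits well within a per-frame budget, which makes the model a practical candidate for real-time autonomous driving applications.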

Code & Data

The processed trajectory datasets, maneuver annotations, and model code are publicly available:

Note: Raw video data cannot be publicly released due to privacy and data-sharing restrictions.

Acknowledgments

This work was funded by Ericsson — Global Artificial Intelligence Accelerator (GAIA) AI Hub Canada in Montréal through the Mitacs Accelerate Program.

Citation

@article{zhou2026turnaware,
  title={Turn-Aware LSTM Model for Vehicle Trajectory Forecasting},
  author={Zhou, Xingnan and Alecsandru, Ciprian and Bashbaghi, Saman and Jeong, Yunseo and Chen, Ye},
  journal={Advances in Transportation Studies},
  volume={LXVIII},
  pages={381--396},
  year={2026}
}