Abstract

Method

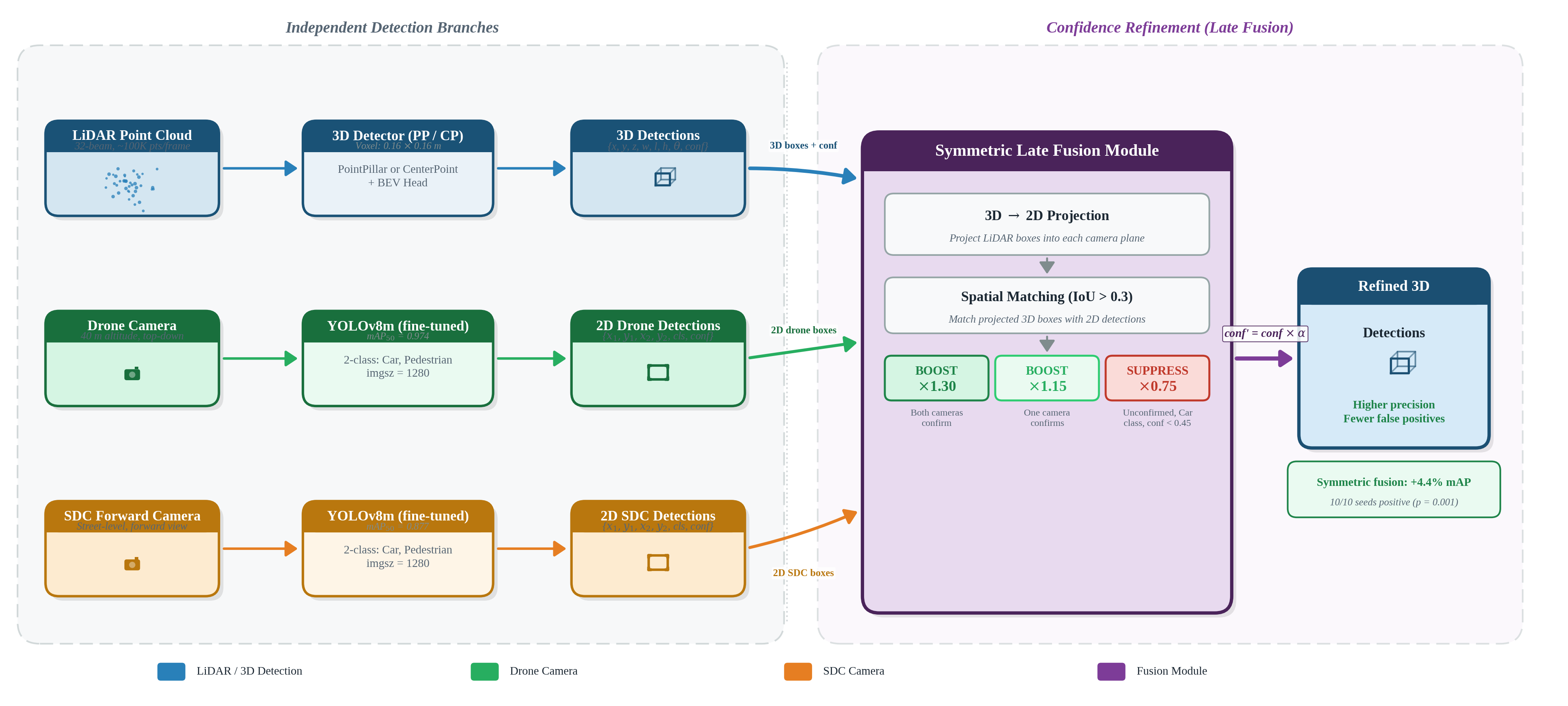

Three-Stage Pipeline

- 3D LiDAR Detection (PointPillar + CenterPoint): converts the ego vehicle’s 64-channel LiDAR point cloud into 3D bounding boxes with class labels and confidence scores, using two complementary architectures that are evaluated separately

- 2D Camera Detection (YOLOv8): two independent YOLOv8m models, fine-tuned on CARLA-rendered data, process images from the drone camera (top-down view, 40 m) and the ego vehicle’s forward camera (labeled SDC in the results)

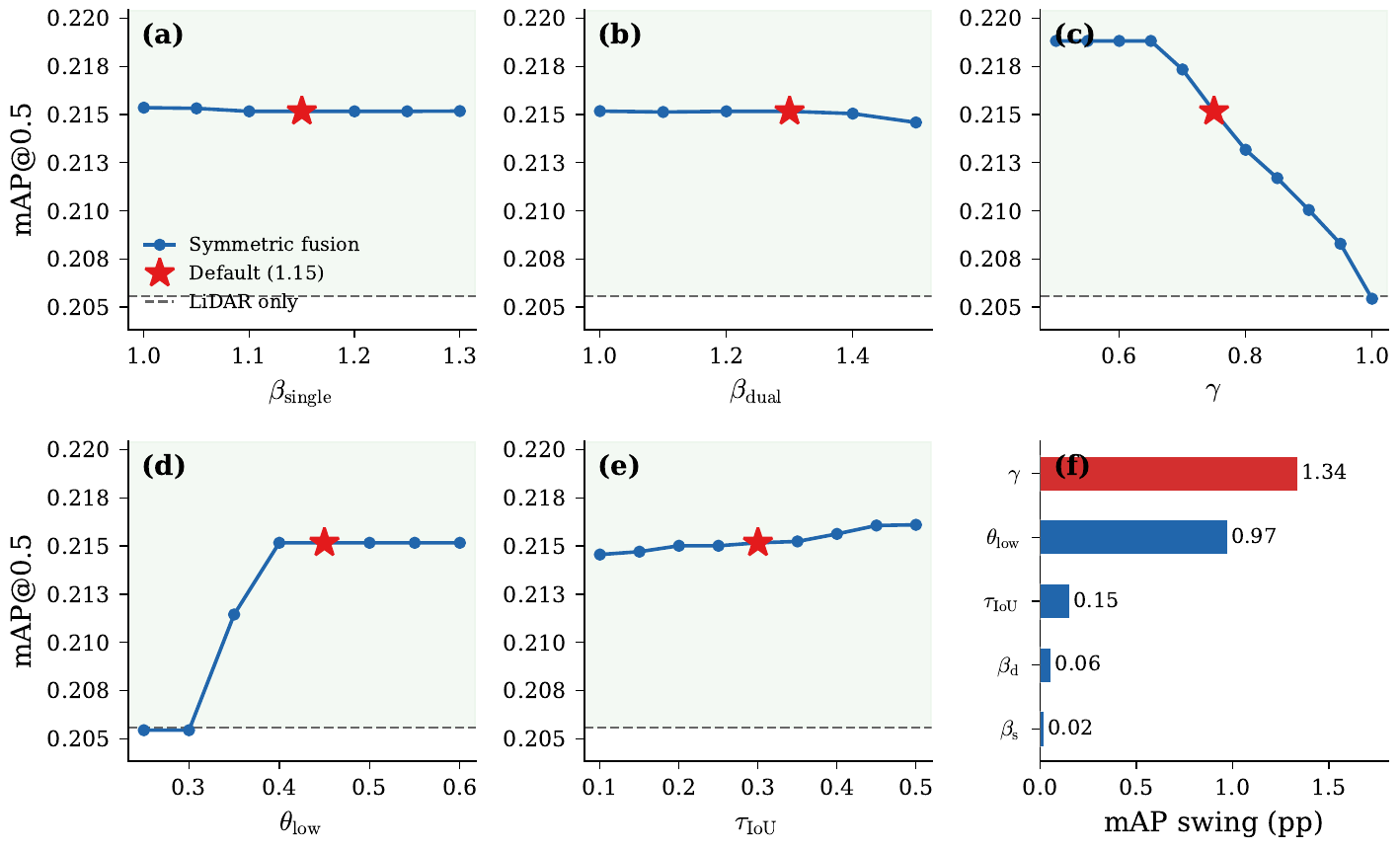

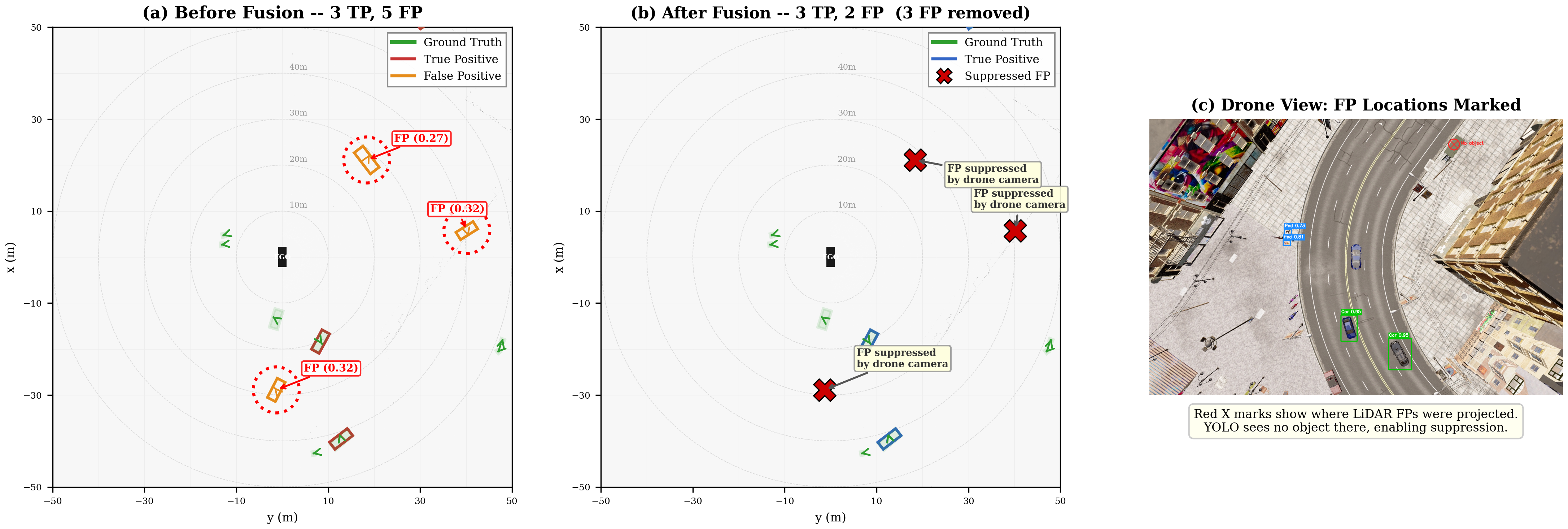

- Symmetric Late Fusion: LiDAR detections confirmed by a camera receive a confidence boost (×1.15 when one camera confirms, ×1.30 when both do), while unconfirmed low-confidence detections are suppressed (×0.75). Both cameras apply the boost and suppress operations uniformly.
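The fusion rule above can be sketched as a per-detection score rescaling. This is a minimal sketch under stated assumptions, not the authors' implementation: the description does not say how a camera "confirms" a LiDAR box (for example, by projecting the 3D box into the image and matching 2D detections), what counts as low-confidence, or how mixed evidence is resolved. Here each camera reports a hypothetical `CamResult` (confirm, miss, or out of view), the `low_conf_thresh=0.3` cutoff and the clip to 1.0 are illustrative choices, boosting takes precedence over suppression, and the `suppressing_cams` set switches between the symmetric design and a forward-boost-only variant.

```python
from enum import Enum

# Confidence factors stated for the fusion stage.
BOOST_SINGLE = 1.15  # LiDAR box confirmed by exactly one camera
BOOST_DUAL = 1.30    # LiDAR box confirmed by both cameras
SUPPRESS = 0.75      # unconfirmed, low-confidence LiDAR box


class CamResult(Enum):
    """Hypothetical per-camera outcome for one projected LiDAR box."""
    CONFIRM = "confirm"          # a 2D detection matches the box
    MISS = "miss"                # box is in view, but nothing matches
    OUT_OF_VIEW = "out_of_view"  # camera cannot see the box


def fuse_score(score, cam_results, suppressing_cams, low_conf_thresh=0.3):
    """Rescale one LiDAR confidence score from per-camera evidence.

    cam_results: dict mapping camera name -> CamResult.
    suppressing_cams: cameras allowed to suppress (both cameras in the
    symmetric design; only the drone in a forward-boost-only variant).
    low_conf_thresh and the clip to 1.0 are illustrative assumptions.
    """
    n_confirm = sum(r is CamResult.CONFIRM for r in cam_results.values())
    if n_confirm >= 2:
        return min(1.0, score * BOOST_DUAL)
    if n_confirm == 1:
        return min(1.0, score * BOOST_SINGLE)
    # No confirmation: suppress only if a suppress-capable camera saw the box.
    missed = any(
        r is CamResult.MISS and cam in suppressing_cams
        for cam, r in cam_results.items()
    )
    if missed and score < low_conf_thresh:
        return score * SUPPRESS
    return score


SYMMETRIC = {"drone", "forward"}
FORWARD_BOOST_ONLY = {"drone"}

# A low-confidence box hidden from the drone and unmatched by the forward camera.
evidence = {"drone": CamResult.OUT_OF_VIEW, "forward": CamResult.MISS}
print(fuse_score(0.2, evidence, SYMMETRIC))           # suppressed to about 0.15
print(fuse_score(0.2, evidence, FORWARD_BOOST_ONLY))  # left at 0.2
```

The two calls differ only in `suppressing_cams`: when the forward camera may only boost, a low-confidence box that only the forward camera can see is never down-weighted, which is one way the symmetric variant could help.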

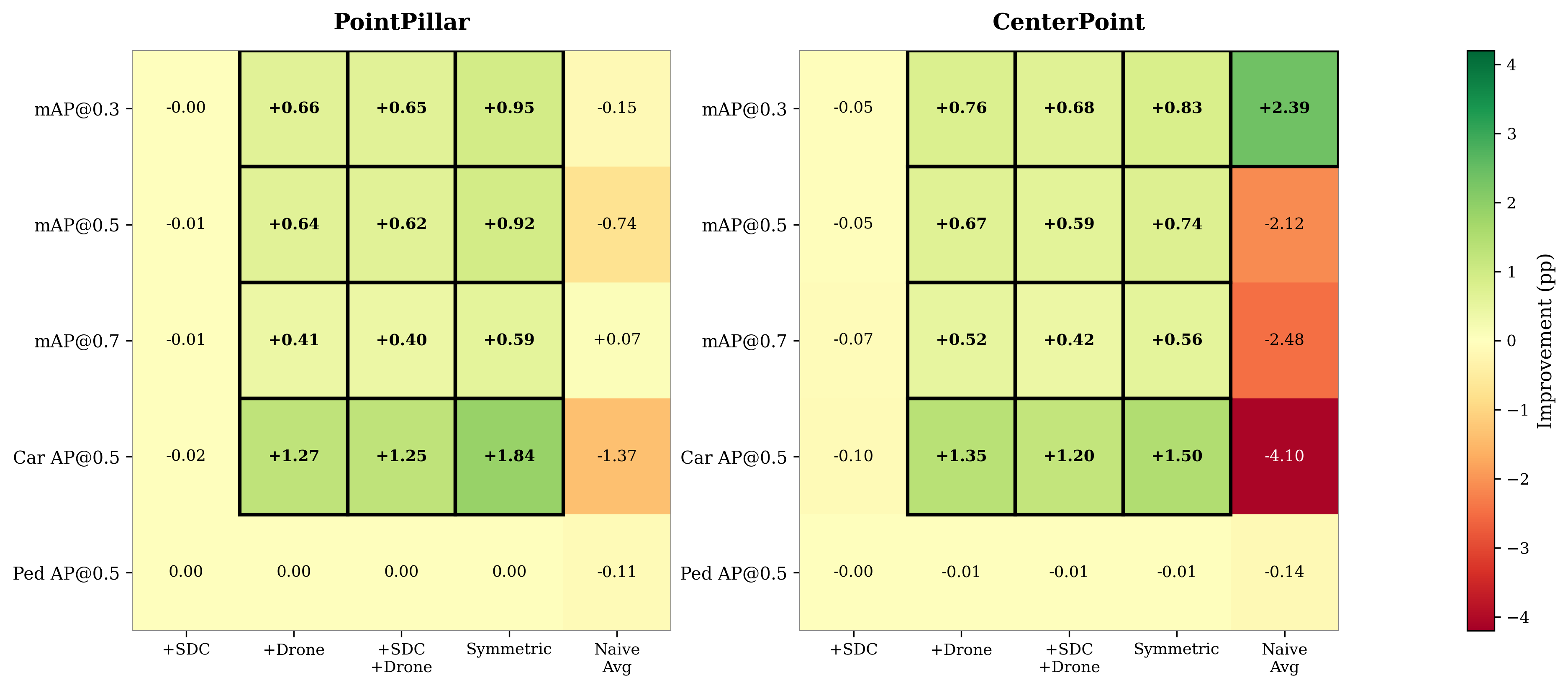

Key Finding: Symmetric > Asymmetric

Counter-intuitively, applying both boost and suppress operations to both cameras outperforms an asymmetric design in which the forward camera may only boost. The forward camera’s value lies entirely in suppressing false positives, not in boosting true positives: used for boosting alone, it leaves mAP unchanged (-0.01 pp for PointPillar, -0.05 pp for CenterPoint, both n.s.).
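One way to see why suppression can matter more than boosting is that AP depends only on how detections are ranked. The toy example below uses made-up scores, not data from this study: three true positives and one false positive ranked above two of them. Scaling the camera-confirmed true positives by ×1.15 leaves the ranking intact, whereas down-weighting the unconfirmed false positive by ×0.75 moves it below every true positive. The `average_precision` helper is a plain non-interpolated AP, and `LOW_CONF = 0.7` is a hypothetical threshold.

```python
def average_precision(scores, is_tp, n_gt):
    """Non-interpolated AP: precision at each true-positive rank, averaged over n_gt."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits, total = 0, 0.0
    for rank, i in enumerate(order, start=1):
        if is_tp[i]:
            hits += 1
            total += hits / rank
    return total / n_gt


# Toy detections (hypothetical): three true positives, one false positive.
scores = [0.9, 0.6, 0.5, 0.4]
is_tp = [True, False, True, True]
confirmed = [True, False, True, True]  # the camera matches only the true positives
LOW_CONF = 0.7                         # hypothetical "low-confidence" cutoff


def rescale(scores, confirmed, suppress):
    """Apply a single-camera boost and, optionally, suppression."""
    out = []
    for s, c in zip(scores, confirmed):
        if c:
            s = min(1.0, s * 1.15)  # boost a confirmed detection
        elif suppress and s < LOW_CONF:
            s = s * 0.75            # suppress an unconfirmed, low-confidence detection
        out.append(s)
    return out


baseline = average_precision(scores, is_tp, 3)
boost_only = average_precision(rescale(scores, confirmed, False), is_tp, 3)
boost_and_suppress = average_precision(rescale(scores, confirmed, True), is_tp, 3)
print(f"{baseline:.3f} {boost_only:.3f} {boost_and_suppress:.3f}")  # 0.806 0.806 1.000
```

This only illustrates the ranking effect; it is not an analysis of the reported experiments.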

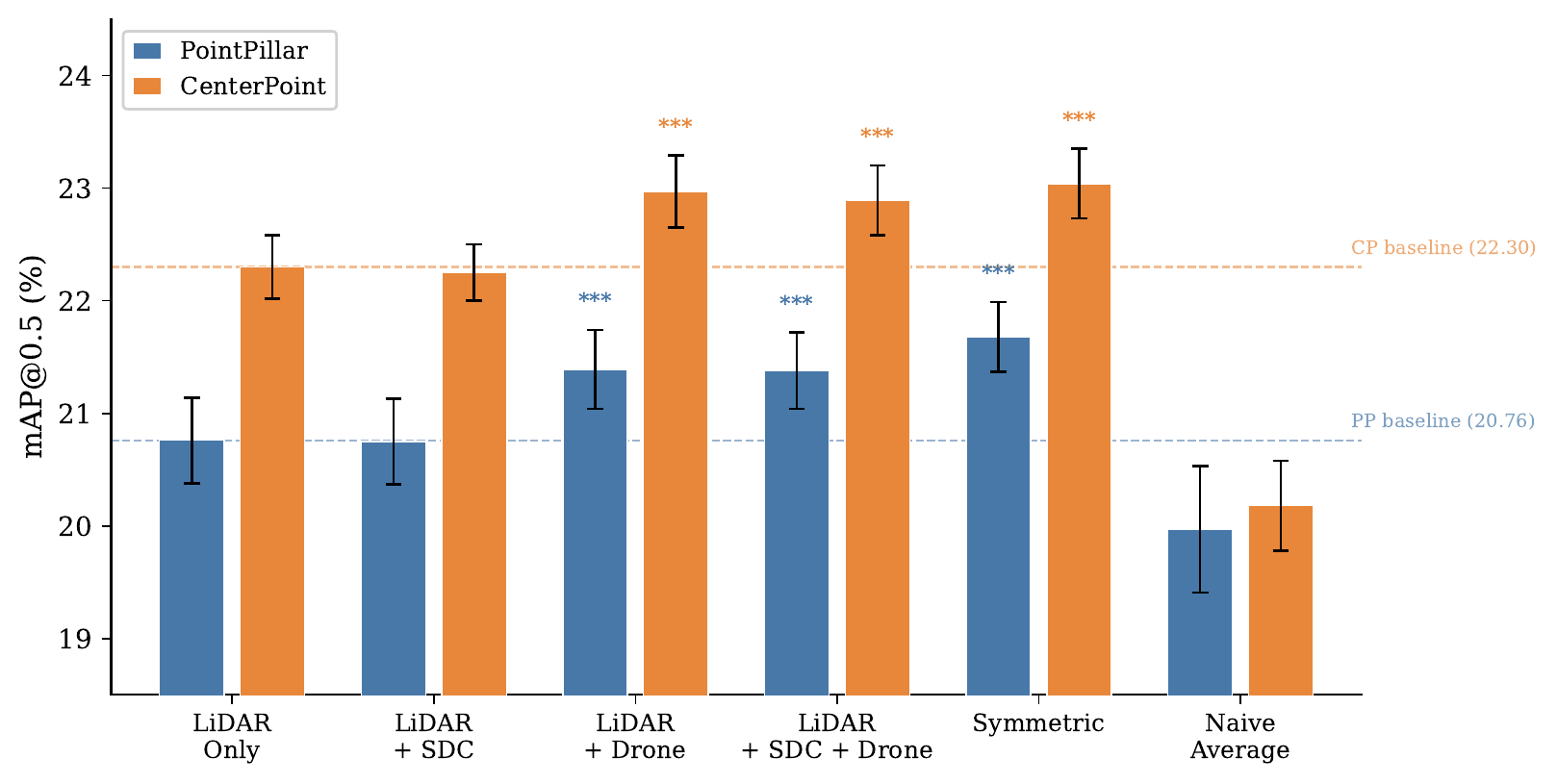

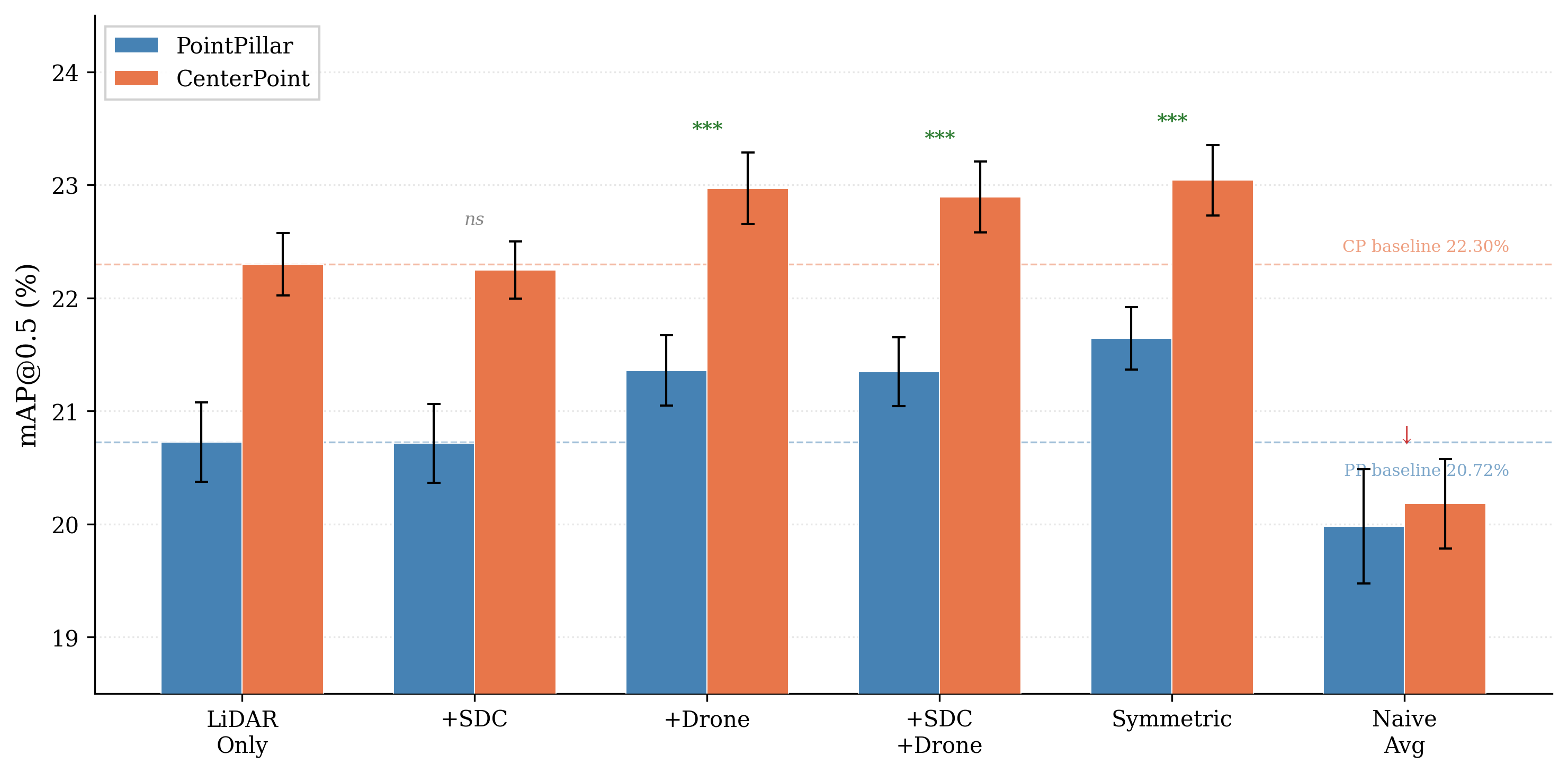

Results

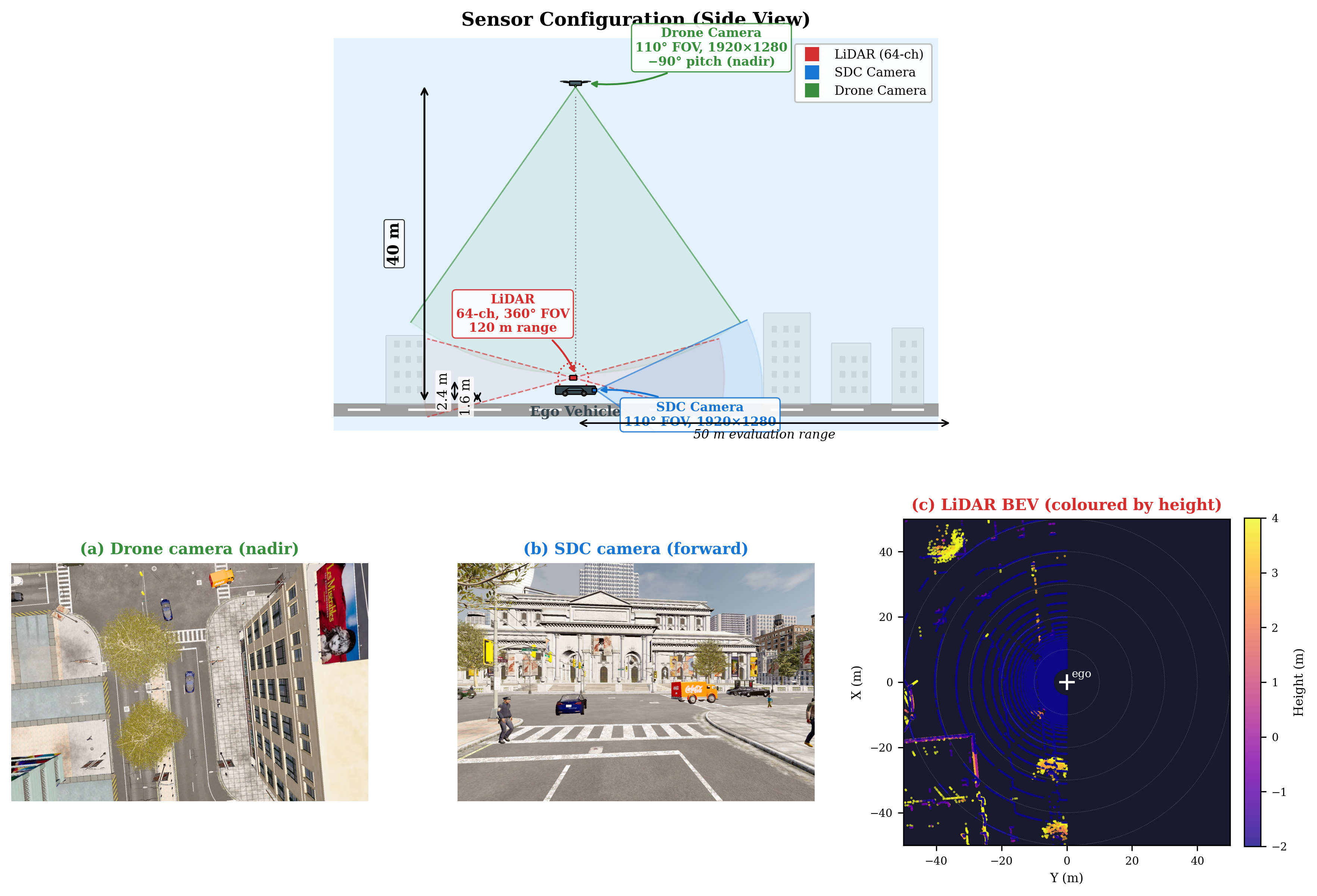

Evaluated on a CARLA Town10HD dataset of 2,600 frames with 35,837 annotations (Car and Pedestrian), using ten-seed repeated random sub-sampling validation.
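Repeated random sub-sampling draws an independent random split for every seed, so test sets from different seeds can overlap, unlike k-fold cross-validation. A minimal sketch for the 2,600 frames, assuming seeds 0 to 9 and a hypothetical 80/20 train/test split (the ratio, and what is trained on each split, are not stated here):

```python
import random


def repeated_random_subsampling(n_frames, n_seeds=10, test_fraction=0.2):
    """Yield (seed, train_idx, test_idx), one independent split per seed.

    test_fraction is a hypothetical value chosen for illustration.
    """
    n_test = round(n_frames * test_fraction)
    for seed in range(n_seeds):
        rng = random.Random(seed)  # per-seed generator for reproducibility
        order = list(range(n_frames))
        rng.shuffle(order)
        yield seed, sorted(order[n_test:]), sorted(order[:n_test])


splits = list(repeated_random_subsampling(2600))
for seed, train_idx, test_idx in splits[:2]:
    print(seed, len(train_idx), len(test_idx))
```

Metrics are then computed once per split and reported as a mean (with spread) over the ten seeds.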

PointPillar Results (10-seed average)

| Configuration | Car AP | Ped AP | mAP@0.5 | Δ (pp) | Significance |
|---|---|---|---|---|---|
| LiDAR-only | 38.50 | 2.69 | 20.76 ± 0.38 | — | — |
| + SDC (boost-only) | 38.50 | 2.69 | 20.75 ± 0.38 | -0.01 | n.s. |
| + Drone | 40.21 | 2.57 | 21.39 ± 0.35 | +0.63 | p = 0.001* |
| + Symmetric | 40.74 | 2.61 | 21.68 ± 0.31 | +0.92 | p = 0.001* |

CenterPoint Results (10-seed average)

| Configuration | Car AP | Ped AP | mAP@0.5 | Δ (pp) | Significance |
|---|---|---|---|---|---|
| LiDAR-only | 39.04 | 5.56 | 22.30 ± 0.28 | — | — |
| + SDC (boost-only) | 38.94 | 5.55 | 22.25 ± 0.25 | -0.05 | n.s. |
| + Drone | 40.39 | 5.55 | 22.97 ± 0.32 | +0.67 | p = 0.001* |
| + Symmetric | 40.54 | 5.54 | 23.04 ± 0.31 | +0.74 | p = 0.001* |
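Assuming mAP@0.5 is the unweighted mean of the Car and Pedestrian AP, the CenterPoint mAP column can be re-derived from its per-class columns and agrees with the reported values up to rounding (within 0.01 pp); Δ is then the difference from the LiDAR-only row in percentage points. A small check with the CenterPoint values copied from the table:

```python
# Per-class AP@0.5 for CenterPoint, copied from the table (10-seed averages).
CENTERPOINT = {
    "LiDAR-only": {"Car": 39.04, "Ped": 5.56},
    "+ SDC (boost-only)": {"Car": 38.94, "Ped": 5.55},
    "+ Drone": {"Car": 40.39, "Ped": 5.55},
    "+ Symmetric": {"Car": 40.54, "Ped": 5.54},
}


def mean_ap(class_aps):
    """Unweighted mean over classes (assumed definition of mAP@0.5)."""
    return sum(class_aps.values()) / len(class_aps)


baseline = mean_ap(CENTERPOINT["LiDAR-only"])
for name, aps in CENTERPOINT.items():
    m = mean_ap(aps)
    print(f"{name:<20} mAP={m:6.3f}  delta={m - baseline:+.3f} pp")
```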

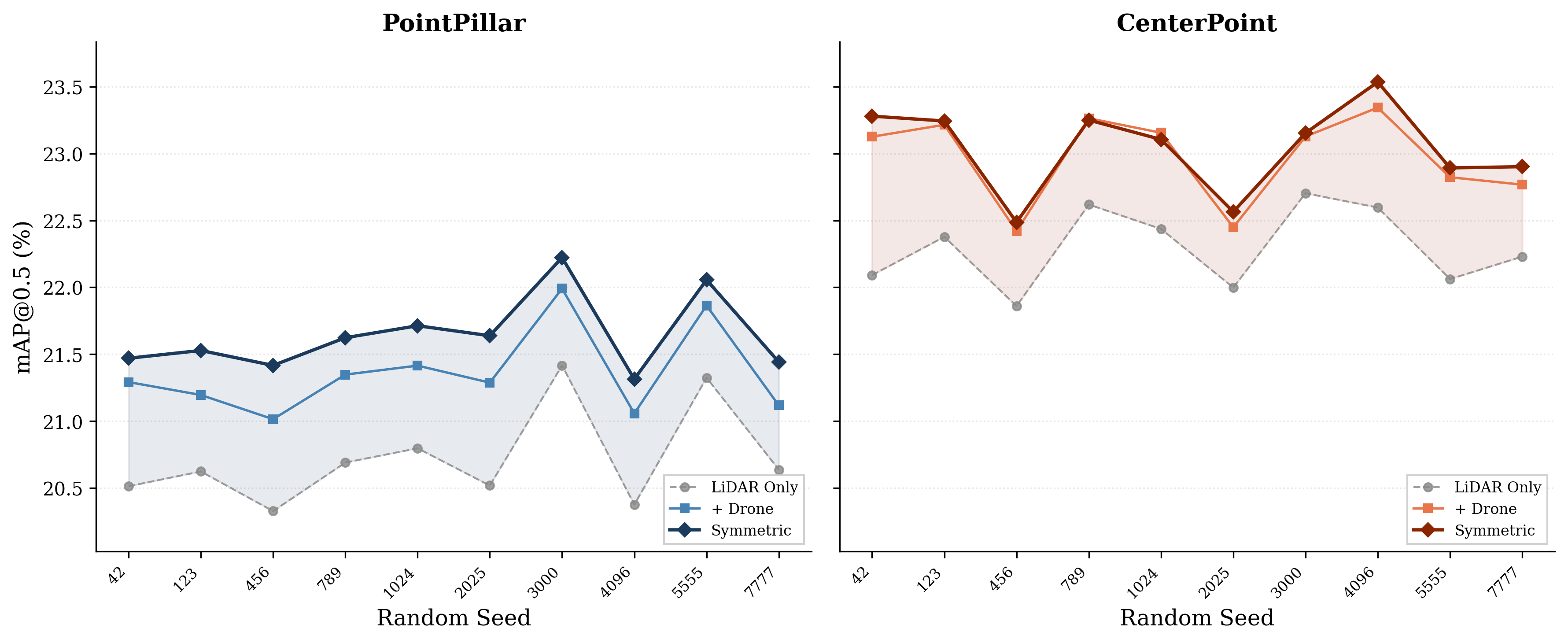

Per-Seed Consistency

Camera Contribution Ablation (PointPillar)

| Configuration | mAP@0.5 | Δ (pp) | Relative |
|---|---|---|---|
| LiDAR-only | 20.76 | — | — |
| + SDC camera (boost only) | 20.75 | -0.01 | -0.05% (n.s.) |
| + Drone camera (symmetric) | 21.39 | +0.63 | +3.0% |
| + Both cameras (symmetric) | 21.68 | +0.92 | +4.4% |
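The Δ and Relative columns follow from the mAP column: Δ is the difference from LiDAR-only in percentage points, and Relative is Δ divided by the LiDAR-only mAP. The same arithmetic gives the share of the two-camera gain that the drone camera delivers on its own. A short check with the PointPillar values from the table:

```python
# PointPillar mAP@0.5 values from the ablation table (10-seed averages).
LIDAR_ONLY = 20.76
DRONE = 21.39
BOTH = 21.68


def gain(value, baseline=LIDAR_ONLY):
    """Return (absolute gain in pp, relative gain in %) over the baseline."""
    delta = value - baseline
    return delta, 100.0 * delta / baseline


for name, value in [("drone", DRONE), ("both", BOTH)]:
    d, rel = gain(value)
    print(f"{name}: {d:+.2f} pp ({rel:+.1f}%)")

# Share of the two-camera gain already obtained with the drone camera alone.
share = (DRONE - LIDAR_ONLY) / (BOTH - LIDAR_ONLY)
print(f"drone share of total gain: {share:.0%}")
```

This reproduces +0.63 pp (+3.0%) and +0.92 pp (+4.4%), with the drone camera accounting for about 68% of the two-camera gain.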

The drone camera drives most of the improvement (+0.63 of the +0.92 pp PointPillar gain). The forward camera contributes nothing when restricted to boosting (-0.01 pp, n.s.) but adds a further +0.29 pp once it can also suppress false positives. Symmetric fusion outperforms asymmetric fusion with both the PointPillar and CenterPoint detectors, and all gains over the LiDAR-only baseline are statistically significant (sign test p = 0.001, t-test p < 0.0001).
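The reported sign-test value is consistent with a one-sided exact sign test in which all ten seeds improve over LiDAR-only: p = (1/2)^10 ≈ 0.00098, which rounds to 0.001 (a two-sided test would give about 0.002). This reading is inferred from the numbers rather than stated explicitly. A standard-library sketch; the t-test is omitted because it needs the per-seed mAP values:

```python
from math import comb


def sign_test_p(n_improved, n_seeds, two_sided=False):
    """Exact sign test p-value under H0: each seed improves with probability 0.5.

    Seeds with no change are assumed to have been dropped beforehand.
    """
    total = 2 ** n_seeds
    upper = sum(comb(n_seeds, k) for k in range(n_improved, n_seeds + 1)) / total
    if not two_sided:
        return upper
    lower = sum(comb(n_seeds, k) for k in range(0, n_improved + 1)) / total
    return min(1.0, 2 * min(upper, lower))


print(sign_test_p(10, 10))                  # 0.0009765625
print(sign_test_p(10, 10, two_sided=True))  # 0.001953125
```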

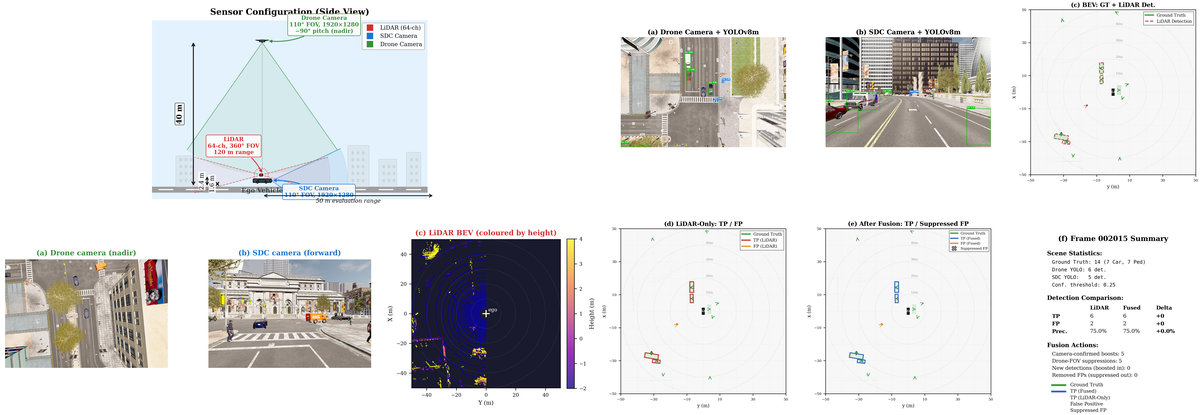

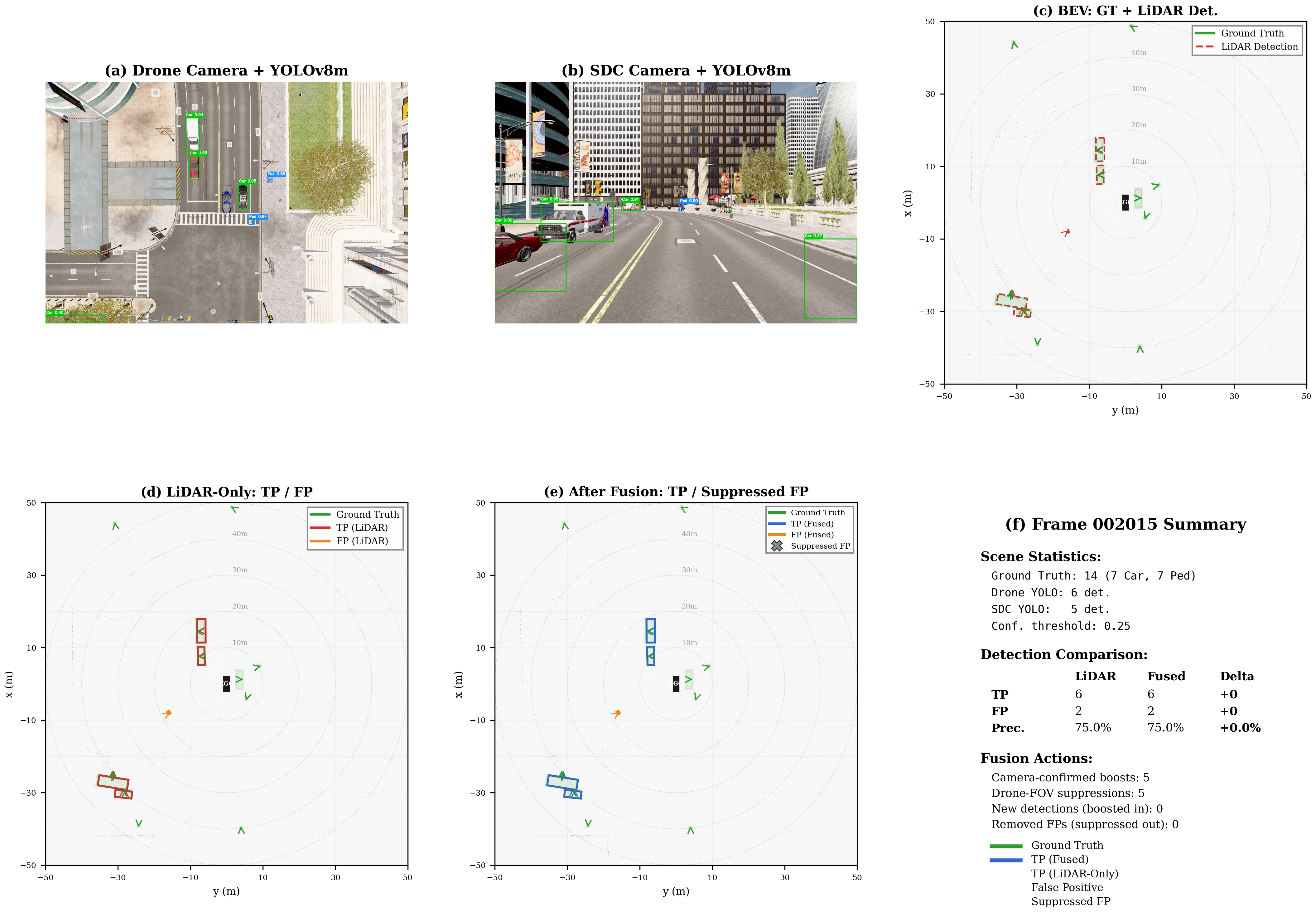

Qualitative Results

Multi-View Sample Pairs

Synchronized samples from all three sensors show how complementary viewpoints resolve ambiguities:

Video Demonstrations

These videos show the fusion system operating in real time on CARLA Town10HD sequences.

Citation

@article{zhou2026bevfusion,
  title={Dual-Camera LiDAR Fusion for Occlusion-Robust 3D Detection in Urban Driving Simulation},
  author={Zhou, Xingnan and Alecsandru, Ciprian},
  year={2026},
  note={In Preparation}
}